MLOps from a bird's-eye view: how to start

April 26, 2024

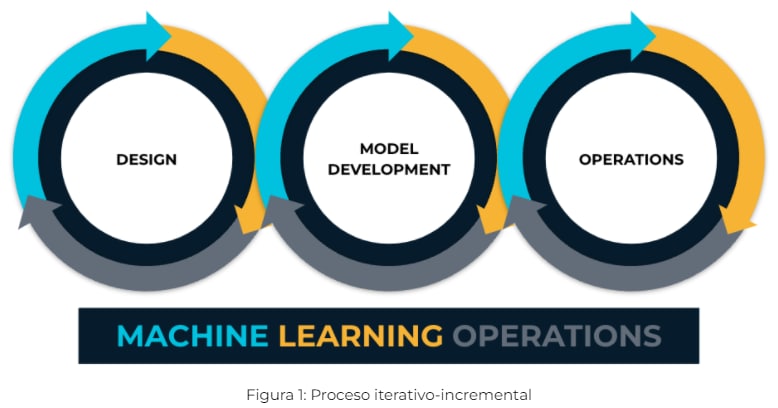

As many of us who have studied Machine Learning (ML) know, these technologies began as research projects and have become instrumental in driving improvement and growth for data-driven businesses. Adapting something purely academic to a software industry as mature as today's has meant breaking down many barriers and beliefs, while keeping in mind agile best practices and the trend, encouraged by the DevOps paradigm, of combining development (Dev) and IT operations (Ops). This is the premise for discussing Machine Learning Operations (MLOps). In Figure 1 we can see how a three-phase cycle gives way to MLOps.

As expected, the rise of MLOps and its widespread adoption call for a systematic and efficient approach to building robust ML systems, which in turn increases the need for ML Engineers. They are in charge of applying DevOps best practices to ML technologies and of building pipelines that move sequentially through different stages, from the need to answer a question and obtain data to delivering solutions that evolve as they are used.

The main public cloud providers, such as Google Cloud, AWS, and Microsoft Azure, have made great strides in this direction by creating certifications tailored to the needs of this area. Google Cloud, for example, offers a Professional Machine Learning Engineer certification (https://cloud.google.com/certification/machine-learning-engineer) that describes engineers as designers, creators, and product experts who can build ML models aimed at tackling a great variety of business challenges.

Adaptation for AI

Many companies are working hard to build ML solutions according to the standards set by the AI community and the different solution providers. There are three typical phases (B1) in AI companies and each one increases the complexity of the solution:

- Tactical phase: manual development of AI models.

- Strategic phase: continuous training of the model by automating the ML pipeline.

- Transformational phase: fully automated processes support AI development.

For more information, please go to the official documentation of Google Cloud:

MLOps: Continuous delivery and automation pipelines in machine learning

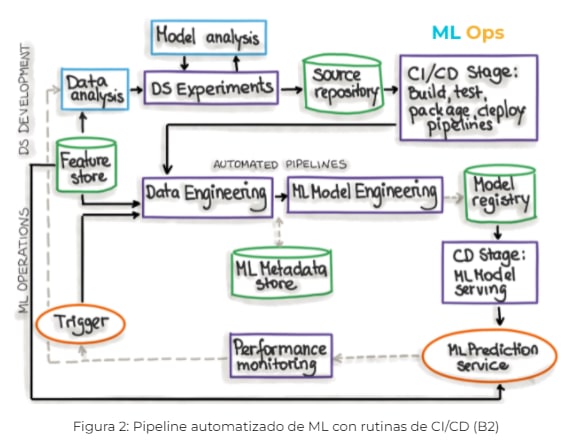

Regarding the last phase, we can see in Figure 2 how the ML part and the operational part (Ops) coexist:

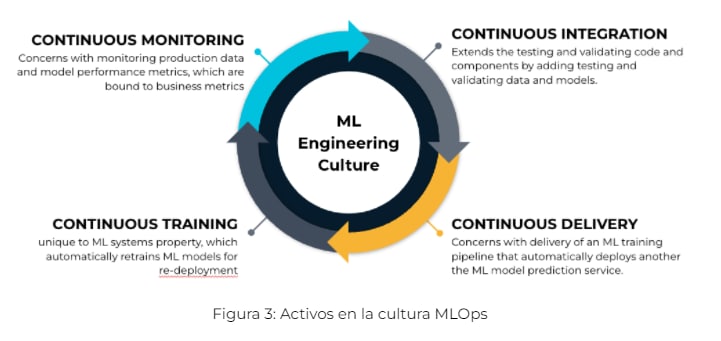

In addition to the above, to understand the implementation of a model, you need to identify what triggers the process, which hyperparameters are needed, when to run a training process, which data are appropriate, which version of each model is in use, how and when to deploy a new artifact, and so on. MLOps-based processes consist of orchestration, logging, monitoring, and reporting tasks, which ensure that ML models, code, and data artifacts are stable. MLOps is an ML engineering culture that includes four fundamental stages, as displayed in Figure 3.
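
The questions listed above (what triggers a run, which hyperparameters and data it uses, which model version results, and when to deploy) can be sketched in a few lines. This is only an illustration under stated assumptions: `RunConfig`, `ModelRegistry`, `should_retrain`, `should_deploy`, and every threshold value are hypothetical names and numbers chosen for the example, not part of any real tool.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class RunConfig:
    """What one training run needs to be reproducible (hypothetical)."""
    trigger: str            # why the run started, e.g. "new_data"
    data_snapshot: str      # identifier of the data version used
    hyperparameters: dict = field(default_factory=dict)


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry that assigns version numbers."""
    versions: list = field(default_factory=list)

    def register(self, config: RunConfig, metric: float) -> int:
        version = len(self.versions) + 1
        self.versions.append(
            {"version": version, "config": config, "metric": metric}
        )
        return version


def should_retrain(new_rows: int, live_metric: float, *,
                   min_new_rows: int = 1000,
                   min_metric: float = 0.9) -> Optional[str]:
    """Return the trigger name, or None if no retraining is needed."""
    if live_metric < min_metric:
        return "metric_drop"   # monitoring reports degraded quality
    if new_rows >= min_new_rows:
        return "new_data"      # enough fresh data has accumulated
    return None


def should_deploy(candidate_metric: float, production_metric: float, *,
                  min_gain: float = 0.01) -> bool:
    """Deploy only if the candidate beats production by a clear margin."""
    return candidate_metric - production_metric >= min_gain
```

A typical flow would call `should_retrain` from a monitoring job, build a `RunConfig` with the returned trigger, register the trained model with its metric, and then ask `should_deploy` before promoting the new version. The thresholds are placeholders that each team would set for its own use case.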

People in Model Operations

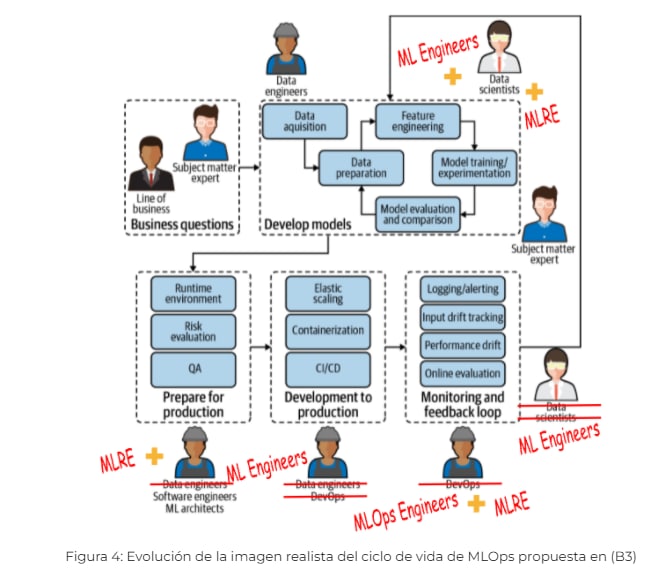

As mentioned, Machine Learning engineers are the main actors in developing ML pipelines and running the main operational stages. But they don't work alone, since operationalizing machine learning models (MLOps) means meeting many requirements.

At Sngular Data & AI, we believe that the MLOps cycle requires several specialists, and we added three roles to the classic scheme (Figure 4), following the trends of the best known public cloud providers:

- ML Engineer: must be an expert in data collection, data verification, feature engineering, metadata management, model analysis, etc.

- MLOps Engineer: able to grasp the DevOps side of things, including identity, role, grant and permission management, source control, and CI/CD pipelines.

- MLRE: an ML Site Reliability Engineering (SRE) specialist who combines system engineering and machine learning to develop and run globally distributed AI/ML/DL systems.

In a future article, we will present the roles of ML Engineer, MLOps Engineer, and MLRE.

As this introduction may have suggested, the possibilities of MLOps are many and its complexity is constantly increasing, but these technologies offer so many advantages that the challenge is worth undertaking. At Sngular Data & AI, we want to help you productize Artificial Intelligence, so we are starting a series of blog posts to lay out our vision.

References

(B1) “Machine Learning Design Patterns” by Valliappa Lakshmanan, Sara Robinson, Michael Munn.

(B2) “Practical MLOps” (https://ml-ops.org/content/mlops-principles#automation).

(B3) “Introducing MLOps” by Mark Treveil, Nicolas Omont, Clément Stenac, Kenji Lefevre, Du Phan, Joachim Zentici, Adrien Lavoillotte, Makoto Miyazaki, Lynn Heidmann.